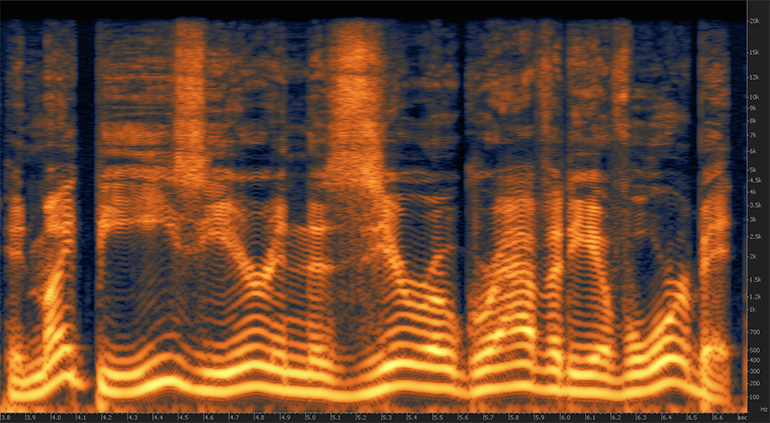

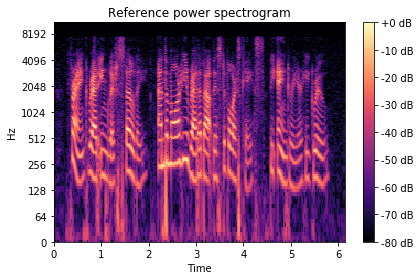

Now the audio file is represented as a two dimensional spectrogram image:

Plt.imshow(melspectogram_db.squeeze().numpy(), cmap='hot') Melspectogram_db=melspectogram_db_transform(melspectogram) Plt.imshow(melspectogram.squeeze().numpy(), cmap='hot') Melspectogram = melspectogram_transform(audio_mono) Sample_rate=fixed_sample_rate, n_mels=128) So let’s use torchaudio transforms and add the following lines to our snippet: melspectogram_transform = The mel scale converts the frequencies so that equal distances in pitch sounded equally distant to a human listener. A mel spectrogram is a spectrogram where the frequencies are converted to the mel scale, which takes into account the fact that humans are better at detecting differences in lower frequencies than higher frequencies. For that purpose we will use a log-scaled mel-spectrogram. We will do that by converting it into a spectogram, which is a visual representation of the spectrum of frequencies of a signal as it varies with time. Now it is time to transform this time-series signal into the image domain. The resulted matplotlib plots looks like this:Īudio signal time series from the YESNO dataset Orig_freq=sample_rate, new_freq=fixed_sample_rate)Īudio_mono = an(resample_transform(audio), The code for such preprocessing, looks like this: yesno_data = ('./data', download=True)Īudio, sample_rate, labels = yesno_data Create a mono audio signal – For simplicity, we will make sure all signals we use will have the same number of channels.In our example we will use this sample rate. As such, the sampling rate of 20050 Hz has been reasonably popular for low bitrate MP3 files. However, in many cases removing the higher frequencies is considered plausible for the sake of reducing the amount of data per audio file. As 20 kHz is the highest frequency generally audible by humans, sampling rate of 44100 Hz is considered the most popular choice. Theoretically the maximum frequency that can be represented by a sampled signal is a little bit less than half the sample rate (known as the Nyquist frequency). 
Resample the audio signal to a fixed sample rate – This will make sure that all signals we will use will have the same sample rate.Īs a first stage of preprocessing we will: The following preprocessing was done using this script on the YesNo dataset that is included in torchaudio built-in datasets. As such, if we will be able to transfer audio classification tasks into the image domain, we will be able to leverage this rich variety of backbones for our needs.Īs mentioned before, instead of directly using the sound file as an amplitude vs time signal we wish to convert the audio signal into an image. These CNNs achieve state of the art results on image classification tasks and offer a variety of ready to use pre trained backbones. In recent years, Convolutional Neural Networks (CNNs) have proven very effective in image classification tasks, which gave rise to the design of various architectures, such as Inception, ResNet, ResNext, Mobilenet and more. Audio Classification with Convolutional Neural Networks In this blog post we will show how using Torchaudio and ClearML enables simple and efficient audio classification. The previous blog posts focused on image classification and hyperparameters optimization. This blog post is a third of a series on how to leverage PyTorch’s ecosystem tools to easily jumpstart your ML/DL project. In this blog post we will demonstrate such an example by using the popular method of converting the audio signal into the frequency domain. Though audio signals are temporal in nature, in many cases it is possible to leverage recent advancements in the field of image classification and use popular high performing convolutional neural networks for audio classification. As such, there is an increasing interest in audio classification for various scenarios, from fire alarm detection for hearing impaired people, through engine sound analysis for maintenance purposes, to baby monitoring. 
Audio classification with torchaudio and ClearMLĪudio signals are all around us. It is reprinted here with the permission of ClearML. This blog post was originally published at ClearML’s website.
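To make the mel scale described in this post more concrete, here is a minimal sketch of one common Hz-to-mel mapping (the HTK-style formula). The helper names `hz_to_mel` and `mel_to_hz` are hypothetical and chosen only for illustration, and the post itself does not state which mel formula its transforms use, so treat the formula as an assumption. The sketch shows that a 100 Hz step covers far more mels at low frequencies than at high ones.

```python
import math


def hz_to_mel(freq_hz: float) -> float:
    """Convert a frequency in Hz to mels (HTK-style formula, assumed here)."""
    return 2595.0 * math.log10(1.0 + freq_hz / 700.0)


def mel_to_hz(mel: float) -> float:
    """Inverse of hz_to_mel: convert mels back to Hz."""
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)


# The same 100 Hz step, taken once at low and once at high frequencies.
low_gap = hz_to_mel(200.0) - hz_to_mel(100.0)
high_gap = hz_to_mel(10100.0) - hz_to_mel(10000.0)

# Upper bound on representable frequency at the post's 22050 Hz sample rate.
nyquist_hz = 22050 / 2

print(f"100 Hz step near 100 Hz:   {low_gap:.1f} mel")
print(f"100 Hz step near 10 kHz:   {high_gap:.1f} mel")
print(f"Nyquist frequency:         {nyquist_hz:.0f} Hz = {hz_to_mel(nyquist_hz):.0f} mel")
```

Because the mapping is logarithmic, mel bands spaced evenly on this scale are narrow at low frequencies and wide at high frequencies, which is the perceptual weighting a mel spectrogram relies on.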
